Because even the best and brightest make mistakes, it's easy to pin the root cause as "human error." It certainly happens often enough. Technically, an error is defined as a human action that unintentionally departs from expected behavior. Under normal conditions, we can make between three and seven errors per hour. Under stressful, emergency, or unusual conditions, we can make an average of 11 errors per hour.

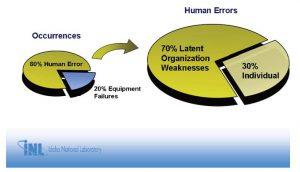

But why do we make errors? Is it the individual's fault? A recent presentation by the Idaho National Laboratory showed the following:

Latent organizational weaknesses include work processes, and, as the above shows, such work processes usually are behind human error. Why did the error occur? The procedure wasn't followed. Why? Human error. Why was there human error? The work process needs improvement.

Sometimes, human error proves just how good some workers are. At the beginning of a root cause analysis, it's not uncommon to hear someone say: "Bob has been calibrating these instruments for 20 years and he just screwed up." Though it may seem like finger-pointing, it's actually the ultimate compliment, and the incident investigation facilitator should recognize it. Think about the math. Bob has performed this task twice a week, 100 times a year for 20 years. That's 2,000 calibrations and this is his first significant error? Error rates of just 1/1000 are considered exceptional, and Bob beat this by a long shot.
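The math behind Bob's record can be checked in a few lines. This is only a rough sketch: the variable names are made up for illustration, the figures come straight from the paragraph above, and a single error in 20 years gives a point estimate rather than a precise rate.

```python
from fractions import Fraction

# Figures taken from the paragraph above (variable names are illustrative).
calibrations_per_year = 100
years = 20
significant_errors = 1

# Benchmark quoted in the text: 1 error per 1,000 tasks is considered exceptional.
benchmark_rate = Fraction(1, 1000)

total_calibrations = calibrations_per_year * years
observed_rate = Fraction(significant_errors, total_calibrations)

print(f"Total calibrations: {total_calibrations}")
print(f"Observed error rate: 1 in {observed_rate.denominator}")
print(f"Benchmark rate divided by observed rate: {benchmark_rate / observed_rate}")
```

On these numbers, Bob's observed rate is half the rate already considered exceptional.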

Does this warrant a root cause analysis at all? It may, because incidents rarely if ever have just one cause. Are we absolutely sure that Bob's mistake was the only reason the incident occurred? Dig deeper and you likely will find there's more to the problem than Bob's once-in-an-eon snafu.

Beyond Blame

If we stop at "Procedure Not Followed," the usual response is to blame a person. Blame is easy and does not focus on the process. Let's face it: "Procedure Not Followed" is a simple (albeit oversimplified) explanation of confusing and complex problems. It also requires little or no work from anyone in an organization except the person who made the mistake. How does this make the person feel? Not listened to, unappreciated and, eventually, apathetic, which isn't good for anybody.

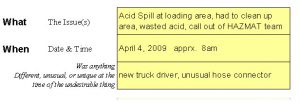

The key to getting beyond the procedure-not-followed conundrum in a root cause analysis is obtaining detail, and this is where the Cause Mapping facilitator plays a central role. During the brief kickoff meeting that can start an incident investigation, the facilitator asks the group about its objective along with general questions about the incident. Expect different perspectives.

Make sure everyone can see what is being written by using a whiteboard, flip chart, or a laptop and a projector. Ask how this incident impacted the organization's overall goals (that is, goals everyone agrees on, like zero safety incidents), and start developing a top-level Cause Map.

Some situations may involve reprimand or discipline. The root cause analysis facilitator must emphasize this is a technical incident investigation, not a disciplinary action. The steps taken are relevant; who did what isn't. No names, no blame.

Some approaches to incident investigations work better than others, depending on the facilitator and organization. If people tell me a certain technician made the error, I may not always meet with that person first. In my mind, the person didn't cause the error; he's simply the one closest to the incident and, for that reason, probably knows some important detail. But because finger-pointing pervades our culture, he might not share the necessary information if I come to him without first developing a simple version of the work process.

If I come to that person after talking with managers, supervisors, and others further removed from the incident, and show him the information I already have, he likely will fill in further details and perhaps even correct some information. I also try to obtain a copy of the procedures (if available) and, from them, draw out a process map. Most important, focusing on information I already have draws attention away from the person and toward the detail on the Cause Map: causes, effects, and supporting evidence. We try to come into these meetings with enough information so we can use the time available as efficiently as possible.

The worst approach, perhaps, is to march directly to the person who supposedly committed the error (eerily close to "committed the crime") and ask, "Why did you not follow the procedure?" The technician will likely rebuff the question: "I don't know. I was having a bad day." In his mind, the more he says, the more he's liable. His goal often is to emerge from this incident investigation unscathed. Without enough information, the Cause Map stops at "Did Not Follow Procedure," which ultimately helps no one.

Process details, on the other hand, help everyone, and getting them requires some interviewing skills. As a facilitator, I often feign ignorance. In other words, I ask people to explain what may seem obvious. After they describe it, I sometimes ask them to explain certain aspects again. I may have understood them, but the more details they introduce, the better. It also moves the conversation away from "who did it?" and toward "why did the incident occur?"

Sensitive Circumstances

Mistrust and miscommunication may make it difficult to dig deeper than "Procedure Not Followed." As someone who used to work in industry, I'm guilty of this. Early in my career, when a breakdown occurred, in the back of my mind I would think the operators were actually trying to break the equipment. But rarely do people sabotage an organization. In fact, most want to do good work, if not for organizational loyalty then at least for personal pride. For this reason, incident investigations should always start from the perspective that people in the organization did not intend to do a bad thing. People want things to go well; some just put more effort into making things go well.

This can be difficult to get past in sensitive circumstances. Consider the Air Force mishap in 2007 in which crews actually lost track of six live nuclear warheads for about 36 hours. A B-52H bomber flew across the United States with six live warheads under its wings. The bomber was transporting cruise missiles, which are designed to carry the warheads, from Minot Air Force Base in North Dakota to Barksdale Air Force Base in Louisiana for disposal. The plane carried 12 missiles in the open air, six under each wing. All 12 were supposed to have dummy training warheads. Six did, but the other six had live warheads, which together had 60 times the power of the Hiroshima bomb.

The bombs had safeguards on them to protect against detonation, so the risk of an explosion was slight. The real danger was that these warheads were parked overnight in Minot for 15 hours and, upon reaching Barksdale, left to sit for nine more hours without special guard.

That's scary stuff, and it certainly made for splashy headlines. If you read the media coverage, you can see how finger-pointing and talk of punishment dominated early, while procedural changes were considered only after a series of exhaustive incident investigations. Early on, the Sunday Times quoted one Air Force official as saying, "This was an unacceptable mistake and a clear deviation from our exacting standards."

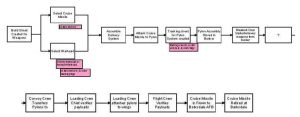

Put another way: The procedure wasn't followed. A much larger review of procedures ensued, not only for this event but for all nuclear weapons handling procedures. Emotion and drama engulfed the event for understandable reasons. Just imagine, though, if the initial reaction wasn't so dramatic but instead focused on the procedure at hand. The process map might have looked something like this:

No names; no blame; no punishments; no colorful language used by newspaper reporters and finger-wagging politicians. A process map has no personality and no bias. It objectifies the incident investigation and gets people focusing on the process. Looking at such a map, the eyes go right to the box with the question mark, the unknown. There's the focus: the steps involved, what happened.

Note that this linear map covers just the process (what happened), not the why. But when a process map is used together with a Cause Map, the approach can uncover some questions. Why are nuclear warheads and the dummy training warheads stored in the same bunker? Why, at a cursory glance, do live and dummy warheads look identical? Why are the missiles transported externally, instead of inside a transport vehicle? For that matter, why do the missiles need any warhead, real or fake?

It turns out there are valid answers to most of these questions, but the point is this: The process and Cause Maps turn focus away from blame to allow an organization to go beyond "Procedure Not Followed." They boil everything down to the steps taken, causes, and effects, and de-emphasize individual personalities and emotions.